Big data analytics is the practice of processing large datasets to uncover unknown relationships, hidden patterns, user preferences, and similar insights. This information is tedious to extract with conventional data mining or other traditional technologies. The big data field has therefore evolved advanced analytical techniques for processing and analysing large volumes of data. One of the most popular big data tools for this purpose is Hadoop. This article presents research perspectives on Big Data Hadoop Projects, including current research directions, areas, and fields.

Hadoop is a Java-based framework for storing and processing large volumes of data. The data can be in any format, structured or unstructured. It is stored on low-cost servers that operate as a cluster. Hadoop also includes the MapReduce programming model for processing data that is distributed across the cluster's name node and data nodes. The primary differences between structured and unstructured data are listed below for reference.

Two Major Types of Big Data

- Unstructured Data

  - Metadata – Semantics

  - Format – Binary Large Objects

  - Integration Technology – Batch Processing

  - Storage – Unorganized and Unmanaged Files

- Structured Data

  - Metadata – Syntax

  - Format – Columns and Rows

  - Integration Technology – Traditional Data Mining (ETL)

  - Storage – Database Management System (DBMS)

How Does Hadoop Work?

Initially, the user submits a job to Hadoop’s job client. The job specifies the locations of both the input and output files. Next, the Java classes that implement the map and reduce functions are supplied so that the work can be allocated efficiently. Finally, the required parameters are set in the job configuration.
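The map and reduce steps mentioned above can be pictured with a small pure-Python sketch. It is not Hadoop's API and does not run on a cluster; the function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `run_job`) and the word-count task are assumptions chosen only to illustrate the flow of a MapReduce job over an in-memory input.

```python
from collections import defaultdict


def map_phase(line):
    """Map step: emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle_phase(pairs):
    """Shuffle step: group all emitted values by their key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Reduce step: combine the values of one key into a single result."""
    return key, sum(values)


def run_job(lines):
    """Run map, shuffle, and reduce in sequence over an in-memory input."""
    mapped = [pair for line in lines for pair in map_phase(line)]
    grouped = shuffle_phase(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    # Hypothetical input lines standing in for the job's input files.
    sample = ["big data needs hadoop", "hadoop stores big data"]
    print(run_job(sample))
```

In a real Hadoop job the same three stages run in parallel on different nodes, and the framework, rather than user code, performs the shuffle.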

Hadoop is often combined with cloud, grid, or edge computing to deliver services or applications to users. Such a cloud-Hadoop system has a master-slave architecture in which the name node acts as the master and the data nodes act as slaves.

Now, let us look at the responsibilities of Hadoop in big data. Generally, numerous big data files are distributed among the data nodes of a cluster. Because these nodes are low-cost commodity servers, the cluster's resources are inexpensive to provision. Metadata records where data is stored and how it has been processed, so the required data can be tracked. This also makes data analysis easier than with conventional methods. Hadoop is therefore widely known for both storing and processing large data in big data Hadoop projects. The key elements of Hadoop are listed below.

What is the role of Hadoop in big data?

- Hadoop YARN – Cluster-based task and resource management

- HDFS – The default distributed storage system for big data

- MapReduce – Hadoop module for parallel data processing, implemented according to application needs

- Hadoop Common – Shared utilities and libraries that support the other Hadoop modules

Although big data offers tremendous advantages, it also faces technical barriers because of its virtually unlimited data size and because it collects unorganized data from many sources. Some of the main barriers are as follows:

What are the barriers to big data analytics?

- Achieving high data quality

- Providing scalable data storage

- Keeping data complexity low

- Maintaining high transparency

- Keeping time delay low

- Establishing high trust

- Ensuring strong data security and privacy

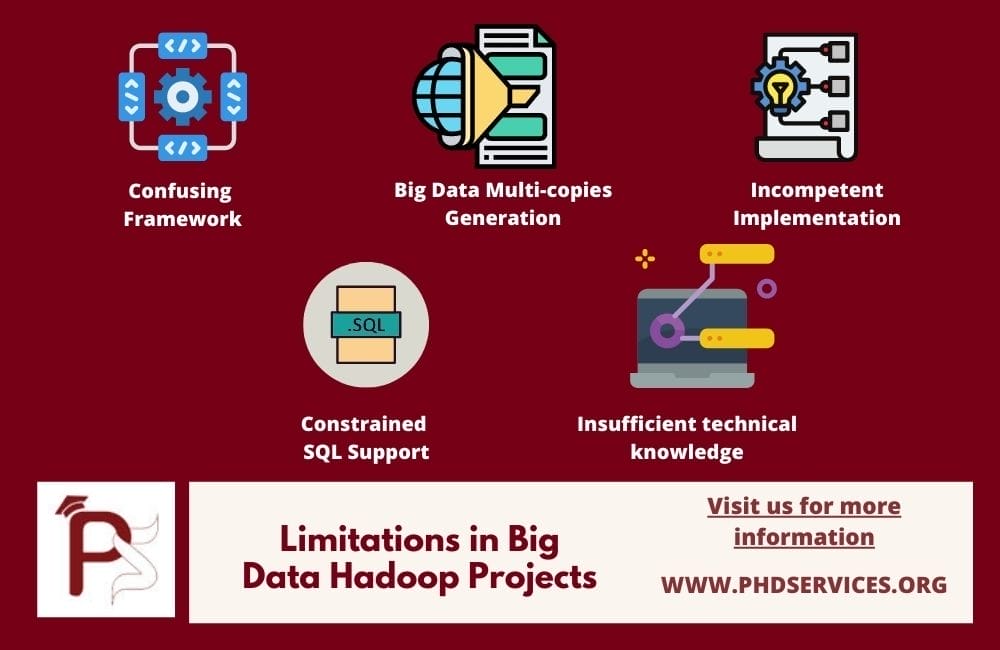

Continuing from these barriers, we now look at the constraints on developing big data Hadoop projects. As mentioned earlier, Hadoop handles large volumes of unstructured data, which demands more effort in general data processing, analysis, and management. Some of the other main research issues or constraints are as follows:

Limitations of Big Data Hadoop Projects

- Confusing Framework

  - Generally, MapReduce is complicated to use, especially for transformational logic

  - To reduce the complexity, several open-source modules have been developed, but these rely on their own languages

- Big Data Multi-copies Generation

  - In HDFS, data is replicated multiple times, with three copies by default

  - To sustain performance through data locality, as many as six copies may need to be maintained

  - Consequently, the stored data size expands

- Incompetent Implementation

  - Hadoop does not provide a query optimizer or cost-based execution strategies

  - Therefore, Hadoop clusters tend to grow larger

- Constrained SQL Support

  - Hadoop is an open-source programming framework that runs on a distributed system

  - It offers limited SQL support and lacks functions such as analytical groupings, subqueries, and many more

- Insufficient Technical Knowledge

  - Hadoop's data mining libraries for big data processing are inconsistent

  - So, developers need to build up their knowledge of distributed MapReduce algorithm development
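The storage cost of the multi-copy limitation listed above is simple arithmetic: raw disk usage is the logical data size multiplied by the replication factor. The sketch below assumes the usual HDFS default of three replicas (the `dfs.replication` setting); the function names and the 100 TB figure are illustrative assumptions, not measurements.

```python
def raw_storage_tb(logical_tb, replication_factor=3):
    """Raw disk needed when every block is stored `replication_factor` times."""
    if replication_factor < 1:
        raise ValueError("replication_factor must be at least 1")
    return logical_tb * replication_factor


def overhead_tb(logical_tb, replication_factor=3):
    """Extra disk consumed by the additional copies alone."""
    return raw_storage_tb(logical_tb, replication_factor) - logical_tb


if __name__ == "__main__":
    logical = 100  # hypothetical dataset size in terabytes
    for factor in (3, 6):
        print(factor, raw_storage_tb(logical, factor), overhead_tb(logical, factor))
```

With three replicas a 100 TB dataset occupies 300 TB of raw disk, and with six replicas it occupies 600 TB, which is why replication has to be weighed against fault tolerance and data locality.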

Our research team has studied big data Hadoop in depth and identified several interesting research areas, each offering numerous research ideas. Only a few of the important areas are listed here, and we also support other emerging research areas. Once you tell us your areas of interest, we can suggest novel research ideas and topics for big data Hadoop projects.

What are the Best Research Ideas in big data?

- Expandable Big Data Storage Systems

- Advanced Tools and Technologies for Big Data Processing

- Big Data Processing using Mining Tools and Technologies

- Assurance of Big Data Privacy and Security

- Flexible System Architectures for Parallel Data Processing

Next, we look at techniques and algorithms that are gaining ground for recent big data research problems. Handling large amounts of data in real-world implementations raises various challenges, so it is essential to address them with efficient research solutions.

Our developers are well equipped to handle a range of advanced techniques, algorithms, and functions. In particular, we develop hybrid and new techniques to tackle all sorts of complexities. For reference, a few important techniques, followed by significant algorithms and methods, are given below.

What are the techniques used for big data problems?

- Problems: Inconsistent classification and Inaccurate or Incomplete Training data

  - Techniques

    - Data Preprocessing

    - Deep Learning

    - Fuzzy Logic Theory

- Problems: Learning from low-value data or unlabeled data or low veracity

  - Techniques

    - Fuzzy Logic Theory

    - Active Learning

- Problems: Greater Volume of Data / High Dimensionality

  - Techniques

    - Distributed Learning

    - Clustering

    - Feature Selection
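As one concrete instance of the feature selection technique listed above for high-dimensional data, the following sketch drops columns whose variance falls below a threshold. Variance thresholding is only one of many feature selection methods; the function name, the threshold value, and the sample columns are assumptions made for illustration.

```python
from statistics import pvariance


def select_features(rows, names, min_variance=0.01):
    """Keep only the columns whose population variance exceeds `min_variance`."""
    columns = list(zip(*rows))  # transpose rows into columns
    kept = [i for i, col in enumerate(columns) if pvariance(col) > min_variance]
    kept_names = [names[i] for i in kept]
    reduced_rows = [[row[i] for i in kept] for row in rows]
    return kept_names, reduced_rows


if __name__ == "__main__":
    # Hypothetical dataset: the first column is constant and carries no information.
    names = ["const", "size", "ratio"]
    rows = [
        [1.0, 5.0, 0.2],
        [1.0, 3.0, 0.9],
        [1.0, 8.0, 0.4],
    ]
    print(select_features(rows, names))
```

Removing uninformative columns before distributed learning or clustering reduces both the data volume that must be moved and the dimensionality the model has to handle.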

We suggest only research solutions that suit your chosen research problems, and we apply specific criteria when selecting a solution for any operation. Take “scheduling” as an example: the goal is to increase throughput and CPU utilization while decreasing waiting time, response time, and turnaround time. Other techniques and algorithms are selected in the same way, according to the project requirements.
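The scheduling metrics named above can be computed directly for a simple case. The sketch below assumes non-preemptive first-come, first-served execution on a single processor with all jobs arriving at time 0; the function name and the burst times are hypothetical.

```python
def fcfs_metrics(burst_times):
    """Average waiting time, average turnaround time, and throughput for FCFS.

    Assumes every job arrives at time 0 and jobs run in list order.
    """
    if not burst_times:
        raise ValueError("burst_times must not be empty")
    waiting, turnaround = [], []
    clock = 0
    for burst in burst_times:
        waiting.append(clock)      # time spent queued before starting
        clock += burst
        turnaround.append(clock)   # completion time minus arrival time (0)
    n = len(burst_times)
    return {
        "avg_waiting": sum(waiting) / n,
        "avg_turnaround": sum(turnaround) / n,
        "throughput": n / clock,   # jobs completed per time unit
    }


if __name__ == "__main__":
    arrival_order = [6, 2, 4]               # hypothetical burst times
    shortest_first = sorted(arrival_order)  # same jobs, shortest job first
    print(fcfs_metrics(arrival_order))
    print(fcfs_metrics(shortest_first))
```

Running the same jobs shortest-first lowers the average waiting and turnaround times while throughput stays unchanged, which shows why the choice of scheduling order depends on which metric a project needs to improve.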

Big Data Algorithms List

- Data Scheduling Techniques (Static and Dynamic)

  - Fairness Aware Scheduling

  - Size-based Scheduling

  - Profile-based Scheduling

  - Rate Monotonic

  - Decentralized Scheduling

  - Rule-based Scheduling

  - Task Aware Scheduling

  - Round Robin

  - Budget Driven Scheduling

  - Earliest Deadline First

  - Dynamic Scheduling

  - Deadline Aware Scheduling

  - Fair, Dynamic Priority, Johnson’s, Greedy, FCFS, Knapsack

  - Data Locality Aware Task Scheduling

  - Shared Input Policy-assisted Job Scheduling

- Data Anonymization Techniques

  - Generalization

  - Bucketization

  - Slicing along with Suppression

  - Multiple-set based Generalization

  - One Attribute per Column Slicing

- MapReduce Techniques

  - Parallel Genetic Procedure

  - Data Redistribution Procedure

- Data Skew Mitigation Techniques

  - LEEN Mitigation

  - SkewReduce Mitigation

  - SkewTune Mitigation

  - LIBRA Mitigation
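Two of the anonymization techniques in the list above, generalization and suppression, can be illustrated on a toy record set. This is a minimal sketch: the field names (`age`, `zip`, `diagnosis`), the 10-year age bands, and the three-digit ZIP prefix are all assumptions, and a real project would choose them from its own privacy requirements.

```python
def generalize_age(age, width=10):
    """Generalization: replace an exact age with the range that contains it."""
    low = (age // width) * width
    return f"{low}-{low + width - 1}"


def suppress_zip(zip_code, keep=3):
    """Suppression: mask every character after the first `keep` characters."""
    return zip_code[:keep] + "*" * (len(zip_code) - keep)


def anonymize(records):
    """Apply both transformations to the quasi-identifier fields of each record."""
    return [
        {
            "age": generalize_age(record["age"]),
            "zip": suppress_zip(record["zip"]),
            "diagnosis": record["diagnosis"],  # sensitive value kept as-is
        }
        for record in records
    ]


if __name__ == "__main__":
    # Hypothetical records used only to show the transformation.
    records = [
        {"age": 34, "zip": "60614", "diagnosis": "flu"},
        {"age": 38, "zip": "60657", "diagnosis": "asthma"},
    ]
    for row in anonymize(records):
        print(row)
```

After the transformation both sample records share the same age band and ZIP prefix, so neither can be singled out by those two fields alone.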

In addition, we have listed the important development tools and technologies for Big Data Hadoop Projects. Our developers have long experience in building different big data Hadoop applications, so we are familiar with the advanced and emerging implementation tools. From the many tools available, the best-fitting ones should be chosen according to project needs. We guide you in selecting a suitable tool, platform, programming language, and dataset for your chosen project topic.

What are the Big Data Analytics Tools?

- Streaming

  - Storm

  - Infosphere

  - Spark

- MapReduce

  - Cassandra

  - Pig

  - Chukwa

  - Mahout

  - Hive

- Batch Streaming Processing

  - Hama

  - Pregel

  - BSPLib

  - Giraph

  - Bulk Synchronous Parallel ML

We have also listed some growing research fields of big data. These fields are enriched by new technological advancements and are identified by our experts as research areas in high demand. Our goal is to provide you with the best research topic along with development support. Connect with us to find a suitable big data Hadoop project topic in your area of interest.

Evolving Technologies in Big Data Analytics

- Cloud Computing

  - Mainly used for improving portable services, storage, and databases

  - Supports the deployment of dynamic big data solutions

- Distributed Computing

  - Mainly used to achieve high-performance data storage at petabyte scale

  - Supports big data computing systems with direct data access

- Big Data Analytics

  - Mainly denotes a multi-stage data analytics method for obtaining better results

  - Supports data acquisition, planning, analysis, processing, and visualization

- Mobile Devices

  - Mainly denotes computing devices that generate big data and receive multiple types of data from other big data systems

- In-Memory Applications

  - Mainly used to enhance database performance for extensive storage

- Solid-state Driven Flash Memory

  - Mainly offers random data-access times below about 0.1 milliseconds

  - Further, large flash memory may be used for high data-access speed

We have also listed the current research directions of big data Hadoop. These areas were selected by our field experts based on the research interests of current scholars. All of them are moving towards the future technologies of big data and Hadoop, so demand for them is steadily increasing. Contact us to find the best research topics in the following areas.

Big Data Research Challenges and Future Directions

- Innovative Platforms / Tools for Big Data Deployment

  - Traditional tools cannot efficiently process large volumes of data

  - Therefore, many researchers are working on modern big data tools and frameworks

- Enhanced Techniques for Data Diagnosis

  - Collect the required data based on certain conditions and make effective decisions based on those conditions

- Advanced Algorithms for Data Viewing

  - Develop robust algorithms to achieve accurate outcomes

  - Improve the visualization of specific data within large volumes of random data

- Refined Techniques for Data Mining

  - Improve mining strategies to collect data from multiple sources in a distributed environment

  - Develop advanced algorithms to fix data collection problems across multiple platforms

- Improved Techniques for Natural Language Processing

  - Process big data with NLP techniques to detect sentiments, emotions, and feelings

Overall, we are here to help you refine your research thinking and turn chosen research ideas into practical developments. We also help you convert your research and project work into a polished manuscript. In other words, we assist you in every phase of Big Data Hadoop Projects under our experts’ supervision. Use this opportunity to advance your research career.